Ian Moyse, Cloud Thought Leader

Trust is a big thing. It can be defined as choosing to make what’s important to you vulnerable to the actions of someone else.

Human interactions are finely balanced, and many psychological aspects come into play before one person can trust another. Even between humans, levels of trust vary depending on the consequence and risk of trusting the other party. For example, trusting another to make a decision that may cost you 50p in a bet is very different to betting a month’s salary on their decision. When we choose to trust another, the level of confidence we require is aligned to the consequence of that trust being wrongly placed. We judge how far to trust based on a breadth of factors, such as prior experience (have we seen a pattern of behaviour that makes us trust?) and the person’s expertise and credentials in that area (which is why expert witnesses are called to court cases and carry weight in the jury’s decision).

We make micro-judgements of whether the gain outweighs the possible pain: is this a trust decision I can make? Are you familiar with the falling trust test, where one person falls backwards, trusting explicitly that another will catch them and prevent pain? How does someone in this situation decide to trust the other, and can this be replicated in a machine? The factors we consider in another human include facial appearance and expressions (in tests, humans accurately predicted the outcome 70% of the time from a 100 ms exposure to a face). A study in the Journal of Neuroscience found that humans can judge the trustworthiness of another in less than 3/100ths of a second.

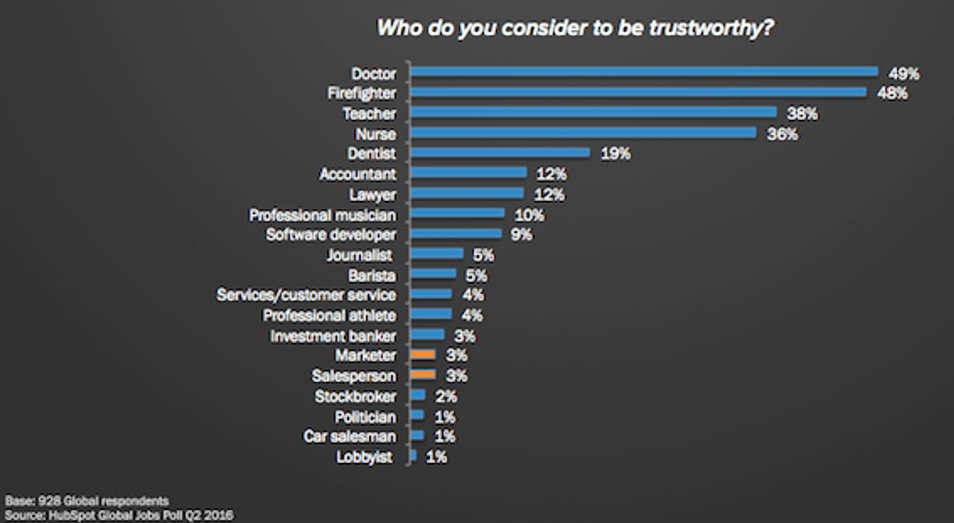

People’s level of trust also changes when they know the profile, history and/or profession of an individual, with fundamental decisions about that individual’s trustworthiness being made on few details. For example, doctors and firefighters are highly trusted compared to salespeople and politicians. This raises a fundamental question. If we cannot trust humans all of the time, and we make that decision based on limited metrics such as visual cues and facts, should we and can we trust machines to a greater or lesser degree?

Artificial intelligence is growing at pace, and often we engage with it without even realising, or without consciously relating the interaction to the term AI. Take Alexa, chatbots and self-driving cars, which are all weaving their way into our everyday lives. Whilst these are forms of AI, the discussion is more often about how and when it will get better than about the trust we are explicitly placing in it.

Only 33% of consumers think they’re already using AI platforms. (Source: PEGA)

It is often argued that AI can do no harm and can be trusted because, after all, it is not a physical form that can bring harm, we control it, and it’s purely a computer program set to do a specific task. However, a common statement persists that ‘Artificial intelligence (AI) is a set of system development techniques that allow machines to compute actions or knowledge from a set of data. Only other software development techniques can be peers with AI, and since these do not “trust”, no one actually can trust AI.’

Asimov’s famous Three Laws of Robotics bring context here:

- A robot may not injure a human being or, through inaction, allow a human being to come to harm.

- A robot must obey the orders given it by human beings except where such orders would conflict with the First Law.

- A robot must protect its own existence as long as such protection does not conflict with the First or Second Laws.

These intimate that this logic should be built into every robot (AI machine) so that it obeys these laws at its core, and that we are therefore safe by definition of this coded ruleset. However, by interpretation, could following these rules mean that trust is in fact not implicit? For example, much debate has been given to autonomous self-driving vehicles and a sample dilemma. If a self-driving car carrying an occupant is about to crash, the First Law must invoke action to save that occupant. But doing so means directing the car towards a pedestrian on the pavement, who will be killed. Taking action to protect the vehicle’s occupant complies with the First Law; the outcome for the pedestrian does not. Therefore, should the pedestrian trust the AI of the vehicle?

No human can be totally trusted by everyone in every situation. Should, and will, any AI take this honour as its adoption and acceptance continue to grow?

- The number of businesses adopting artificial intelligence grew by 270% in 4 years (Source: Gartner)

- 15% of all customer service interactions are expected to be fully powered by AI by end of 2021

Humans have motivations of achievement, affiliation or power, developed through culture and life experiences. These motivations come from biological, personal and social origins and are influenced by social approval, acceptance, a need to achieve and the motivation to take or avoid risks.

How does this translate into the machine world, where AI is based on what it learns from data, on pattern matching and on working out the most appropriate action to take?

Will humans learn to trust AI over their human counterparts? We are yet to see a machine AI rob a bank for its own motivations or harm someone for its own gain. Certainly we do not see machines making emotional, irrational decisions, so are humans leaning towards trusting AI for the very reason that it is not humanlike?

How much trust are we willing to place in machines and AI? We are already trusting it to drive us in a speeding chunk of metal along a highway with our family in the vehicle. Is it any surprise that we may be willing to allow AI to guide our financial decisions? After all, this is data driven, right? The one thing AI machines are empowered to do is process and assimilate vast amounts of data more quickly and effectively than a human brain and provide recommendations. We all trust that large financial pivot table to guide us on the company financials, so how much further a step is it to allow AI to advise us on investments?

Debate on both sides prevails. Data-driven decisions are the mantra of most businesses today; however, go one step further to AI-based decisions and we see concerns flare.

- 44% of executives believe artificial intelligence’s most important benefit is providing data that can be used to make data-driven decisions. (Chatbots Magazine)

- 76% of CEOs are most concerned with the potential for bias and lack of transparency when it comes to AI adoption (PwC)

So, are we at the dawn of a new AI-driven financial world? According to a new study by Oracle and personal finance expert Farnoosh Torabi, we are: they found that when it comes to money, people trust AI and robotic advice over their own.

The full report can be accessed here.